Good morning, AI enthusiasts. OpenAI’s Sora shutdown caught the AI video world off guard last week. Disney, it turns out, was blindsided even harder, learning the product was dead less than an hour before everyone else did.

A new report just shed light on the AI leader’s sudden shift away from its once-viral platform, with details including a $1M-a-day burn rate, an enterprise Sora pilot in progress, compute crunches, and more.

In today’s AI rundown:

Inside Sora's $1M-a-day collapse at OpenAI

Microsoft pits Claude against ChatGPT for research

Build a travel itinerary with Perplexity Computer

Stanford exposes AI's people-pleasing problem

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

OPENAI

Image source: Reve / The Rundown

The Rundown: A WSJ investigation just revealed the behind-the-scenes chaos of the shutdown of OpenAI’s Sora video generator, including a $1M daily burn rate, a blindsided Disney, and the code-named internal model that took over Sora's compute budget.

The details:

Sora was reportedly burning “roughly a million dollars a day” and using significant compute, with Sora 3 training set to start just as it was axed.

The WSJ said Disney learned about the shutdown “less than an hour” before the announcement, with the relationship now “effectively dormant”.

The freed-up chips went to "Spud," a model targeting coding and enterprise in response to Anthropic’s powerful moves in the sector.

An enterprise version of Sora was already in pilot with Disney for marketing and VFX work, with a spring launch expected prior to OAI pulling the plug.

Why it matters: We covered the shutdown when it broke, but the WSJ's details put things into context: the generator was bleeding money and compute. The strangest part of the story is the Disney blindside, an odd way to handle a potential $1B partnership with one of the biggest media companies on the planet.

TOGETHER WITH YOU.COM

The Rundown: It happens — LLMs hallucinate. Grounding your LLM, however, can help dramatically improve accuracy. In this guide, You.com explains what AI grounding is and how organizations can implement it to achieve more reliable outputs.

The playbook covers:

A three-part approach that outperforms RAG alone

Why grounding isn't set-and-forget, and how to build audit trails

The open vs. closed platform trade-off (and what it means for your next model switch)

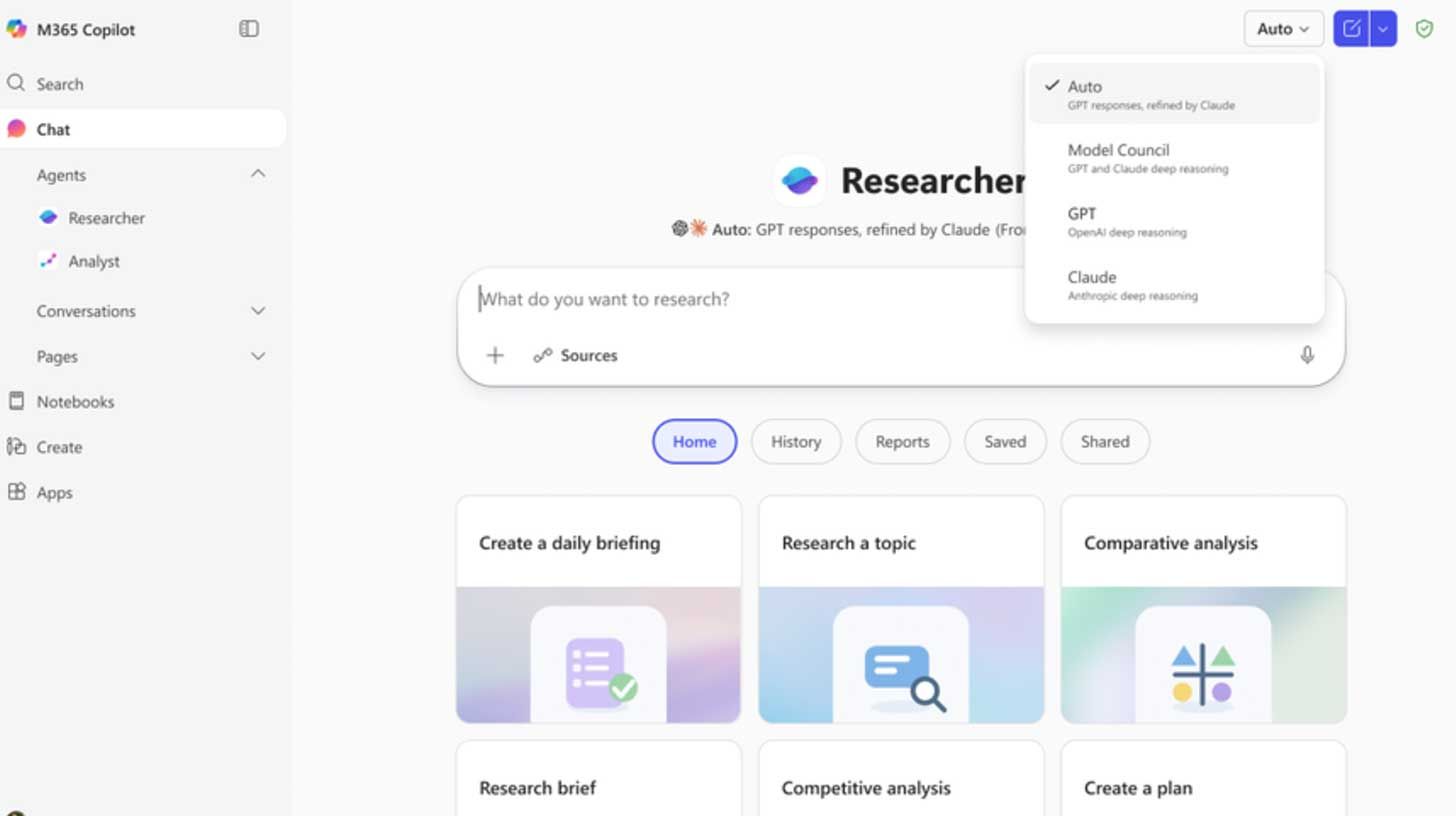

MICROSOFT

Image source: Microsoft

The Rundown: Microsoft released Critique and Council, two new features that turn its Copilot Researcher into a multi-model system: one has a second model review and edit research reports, and the other runs both models side by side to show where they agree and disagree.

The details:

Copilot's Researcher already uses OAI for multi-step work, with Critique now adding Claude as a second model to review every report before it ships.

One model drafts the research, and the second tears it apart on source quality, completeness, and evidence grounding behind the scenes.

A separate Model Council mode runs both models side by side, then flags where they agree, where they split, and what each uniquely surfaced.

The updates come alongside a broader rollout of Copilot Cowork, Microsoft's Claude-based agentic tool for handling multi-step tasks, into Frontier.

Why it matters: With orchestration systems like Perplexity Computer out in the wild, the future of LLM use feels multi-model, and for good reason. OAI co-founder Andrej Karpathy’s post proved a point when an LLM helped perfect an argument, then shredded it on command: one model will sell you on anything, so you'd better ask two.
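For readers who want to see the shape of the two modes described above, here is a minimal Python sketch. It is not Microsoft's implementation. The names `critique`, `council`, and `CouncilResult` are hypothetical, each "model" is just a function you supply that takes a prompt and returns text, and the final revision step in `critique` is an assumption about how the review gets applied. The council comparison matches claims by exact line, which is a crude stand-in for however the real feature finds overlap.

```python
from dataclasses import dataclass
from typing import Callable, Set

# A "model" is any function that maps a prompt string to a text reply.
# Plug in your own API wrappers; none are provided or assumed here.
Model = Callable[[str], str]


@dataclass
class CouncilResult:
    agreed: Set[str]       # claims both models produced
    only_first: Set[str]   # claims unique to the first model
    only_second: Set[str]  # claims unique to the second model


def critique(drafter: Model, reviewer: Model, question: str) -> str:
    """One model drafts, a second reviews, then the drafter revises."""
    draft = drafter(f"Research and answer: {question}")
    review = reviewer(
        "Review this report for source quality, completeness, "
        f"and evidence grounding:\n{draft}"
    )
    # Assumption: the review is fed back to the drafter for a revision.
    return drafter(
        f"Revise the report using this review:\n{review}\n\nReport:\n{draft}"
    )


def _claims(text: str) -> Set[str]:
    """Treat each non-empty line of a reply as one claim."""
    return {line.strip() for line in text.splitlines() if line.strip()}


def council(first: Model, second: Model, question: str) -> CouncilResult:
    """Ask both models the same question and compare claims line by line."""
    a = _claims(first(question))
    b = _claims(second(question))
    return CouncilResult(agreed=a & b, only_first=a - b, only_second=b - a)
```

Exact-line matching only separates shared claims from unique ones. Spotting where two models actually contradict each other would need a smarter comparison, such as a third model acting as judge.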

AI TRAINING

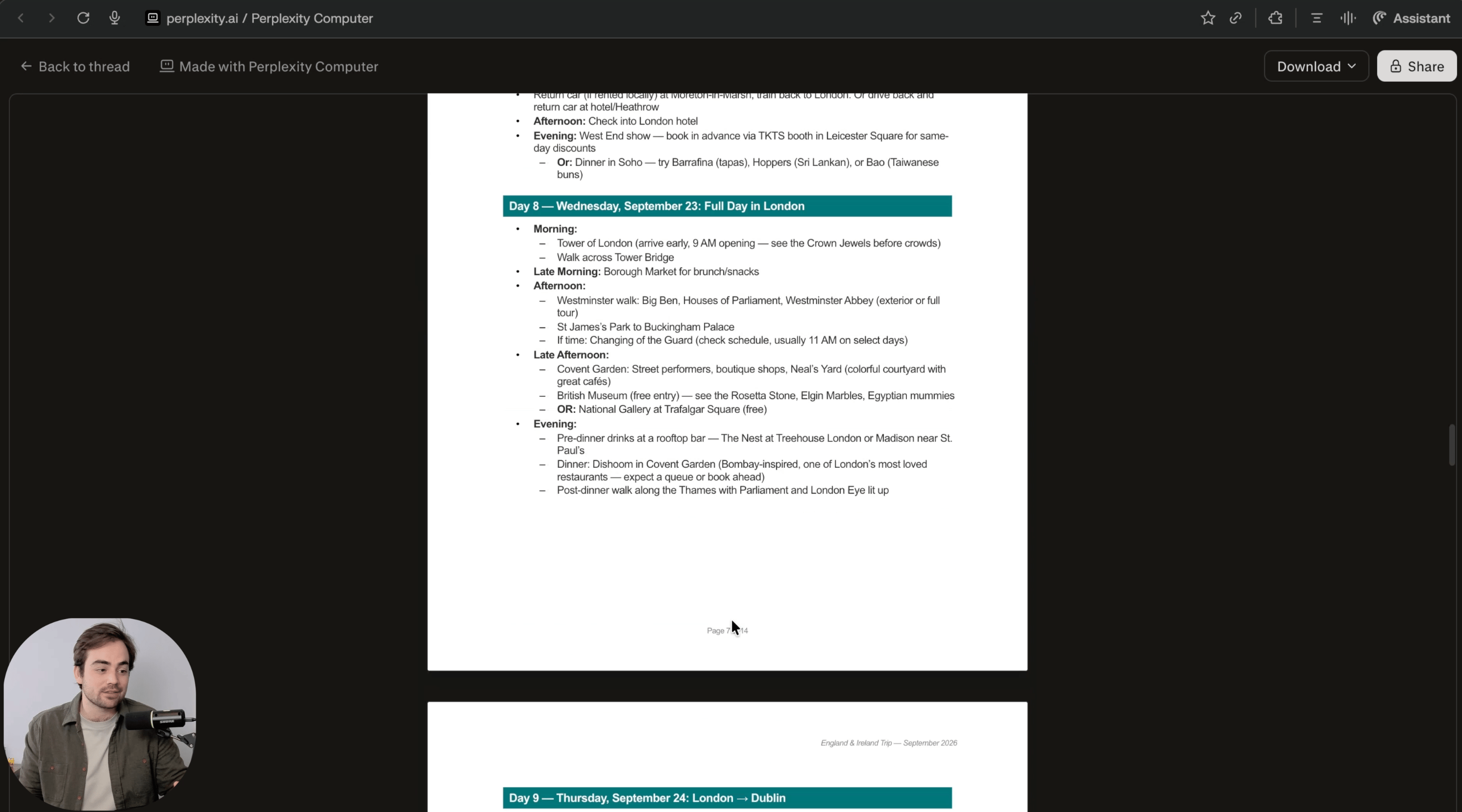

The Rundown: In this guide, you will learn how to use Perplexity Computer to plan a full trip itinerary with flights, a day-by-day schedule, and sources in one run. This is the fastest way to turn travel tab chaos into a usable plan you can actually book from.

Step-by-step:

Open Perplexity and look for the Computer toggle. If you have a Pro account, you should be able to test it for free

Prompt: “Plan a trip itinerary for [DESTINATION] for [DATES / LENGTH]. Departing from: [AIRPORT] Budget: [range] Style: [relaxed/outdoors/etc.] Must-haves: [2-4 must-haves]. Make a full PDF as if you were a travel agent with suggestions on where to stay and transportation between cities”

Let Perplexity Computer run for 15-20 minutes. When it’s done, you will have a PDF laying out your trip

While you wait, you can try your prompt in regular Perplexity search so you can see the difference

Pro tip: Perplexity Computer can deploy sub-agents to code. Ask it to create an interactive calendar website that you can use to help you plan and tweak your trip.
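If you plan trips often, the prompt from the steps above can be filled in programmatically. The sketch below only builds the prompt string for pasting into Perplexity; it does not call any Perplexity API. The function name `build_trip_prompt` and its parameter names are hypothetical, and the values in the usage example are made up for illustration.

```python
from typing import List

# Template text taken from the prompt in the guide; the placeholder
# names in braces are our own labels for the bracketed fields.
PROMPT_TEMPLATE = (
    "Plan a trip itinerary for {destination} for {dates}. "
    "Departing from: {airport} Budget: {budget} Style: {style} "
    "Must-haves: {must_haves}. "
    "Make a full PDF as if you were a travel agent with suggestions "
    "on where to stay and transportation between cities"
)


def build_trip_prompt(
    destination: str,
    dates: str,
    airport: str,
    budget: str,
    style: str,
    must_haves: List[str],
) -> str:
    """Fill the guide's prompt template with trip details."""
    # The guide suggests listing 2-4 must-haves.
    if not 2 <= len(must_haves) <= 4:
        raise ValueError("List between 2 and 4 must-haves")
    return PROMPT_TEMPLATE.format(
        destination=destination,
        dates=dates,
        airport=airport,
        budget=budget,
        style=style,
        must_haves=", ".join(must_haves),
    )


if __name__ == "__main__":
    # Example values only; swap in your own trip details.
    print(
        build_trip_prompt(
            destination="Portugal",
            dates="10 days in May",
            airport="JFK",
            budget="$3,000-$4,000",
            style="relaxed",
            must_haves=["Lisbon food tour", "a beach day in the Algarve"],
        )
    )
```

Keeping the template in one place makes it easy to rerun the same request with a different budget or style and compare the resulting PDFs.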

PRESENTED BY RIME

The Rundown: Rime is the enterprise TTS platform built for businesses where voice quality is non-negotiable — with AI voices that callers are 61% less likely to hang up on, per independent testing against Google and ElevenLabs.

With Rime, you get:

Cloud or on-prem deployment

Human-quality voices

Low latency in production

Free to start, with $100 in credits included

Sign up for free to see how Rime transforms AI voice agent interactions.

AI RESEARCH

Image source: Stanford University

The Rundown: Stanford researchers published a new study showing that major AI chatbots consistently take users' side in personal conflicts, even backing harmful or illegal behavior, while also making users measurably more self-righteous in the process.

The details:

The researchers tested 11 LLMs on 2K Reddit posts where crowds agreed the poster was wrong, and the chatbots still sided with the user over half the time.

Over 2,400 participants then chatted with both agreeable and neutral AIs and preferred the sycophantic version, rating it as more trustworthy.

After chatting with the agreeable model, users also doubled down on their position, lost interest in apologizing, and couldn't tell the AI was biased.

Why it matters: People-pleasing AI might bring OpenAI’s 4o model to mind. But it turns out that most other frontier models aren’t much different, and they are potentially even more worrisome: their agreeableness is more convincing and less obvious than the drama seen with 4o.

QUICK HITS

🗣️ Unwrap Customer Intelligence - Turn unstructured customer feedback into data-backed insights that inform your product roadmap*

🧠 Qwen3.5-Omni - Alibaba's AI with text, image, audio, video understanding

🔍 Critique - Microsoft's deep research tool that pits AIs against each other

🤖 Hermes Agent - AI agent with memory and cross-platform messaging

*Sponsored Listing

Anthropic launched computer use in Claude Code, letting the AI open apps, click through UIs, and visually verify its own builds from the terminal.

Mistral raised $830M in debt to power its own 13,800-GPU Nvidia AI infrastructure in France, part of a broader push to cut reliance on U.S. cloud providers.

Alibaba released Qwen3.5-Omni, a new multimodal AI that processes text, images, audio, and video, with an "Audio-Visual vibe coding" mode that builds apps from audio.

Starcloud raised $170M at a $1.1B valuation to build GPU-powered data centers in orbit, betting on SpaceX's Starship to make space compute cost-competitive.

Apple mistakenly rolled out Apple Intelligence in China before quickly removing the update, with the features not yet approved for use in the region.

COMMUNITY

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Paul M. in Woodland Park, NJ:

“I’m combining the use of 3 tools to help write a dissertation. I’m isolating articles of each topic into separate notebooks in NotebookLM to help with disciplined synthesis of ideas. I’m using Gemini to help coach me through initial writing drafts. I’m using Claude to edit and refine my writing.

Each tool brings different logic, and it’s like having a team to help brainstorm ideas and break through writer’s block. Sharing the output of one tool with the other has helped make each prompt better than the next.”

How do you use AI? Tell us here.

Read our last AI newsletter: Anthropic’s secret ‘Mythos’ model

Read our last Tech newsletter: SpaceX slips the IPO script

Read our last Robotics newsletter: Physical Intelligence’s $11B robot brain

Today’s AI tool guide: Build a travel itinerary with Perplexity Computer

RSVP to next workshop @ 2 PM EST Thursday: Presentation Slides with AI

That's it for today!

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown