Good morning, AI enthusiasts. Last summer, Mark Zuckerberg handed Alexandr Wang the keys to a brand new lab with some expensive, freshly poached talent from top rivals. Today, we got the first look at what that money built.

Meta's Muse Spark isn't topping every benchmark, but it puts the tech giant squarely back in the game — with the resources, data, and 3B+ daily users to build on from here.

In today’s AI rundown:

Meta Superintelligence Labs ships first model

HeyGen’s Avatar V solves AI’s identity drift

Build an automated ad generator with this tool

Anthropic simplifies the agent-building system

4 new AI tools, community workflows, and more

LATEST DEVELOPMENTS

META

Image source: Meta

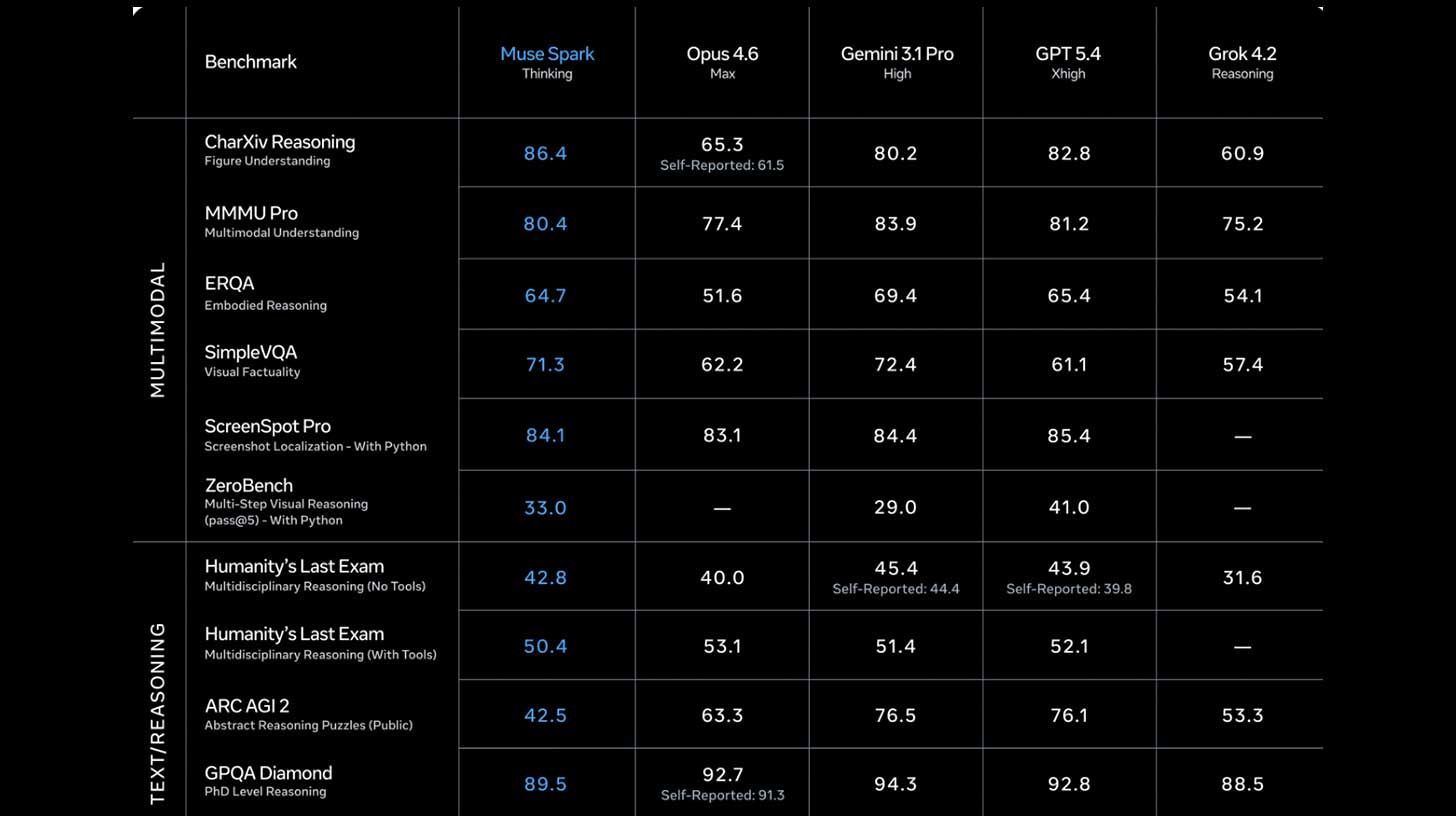

The Rundown: Meta’s Superintelligence Labs just rolled out Muse Spark, a multimodal reasoning model and the highly anticipated first release from the high-profile division Alexandr Wang assembled last summer.

The details:

Muse Spark handles voice, text, and image inputs, with a contemplating mode that pits multiple agents against each other on hard problems.

The model’s benchmarks are competitive with frontier rivals like Opus 4.6 and GPT 5.4 on reasoning, though it lags in coding and tests like ARC-AGI 2.

Muse Spark is particularly strong in health reasoning, with the company prioritizing the area as part of its ‘personal superintelligence’ mission.

Unlike the Llama family, Muse Spark is proprietary, with Meta saying it hopes to open-source future versions but has not committed to a timeline.

Wang took over Meta Superintelligence Labs 9 months ago after Zuck acquired Scale AI for $14.3B, saying the team “rebuilt our AI stack from scratch.”

Why it matters: Meta is back in the game. While still sitting below the top models, Muse Spark is a serious change from where Meta sat with its Llama family. It may not break the internet, but with tons of resources, valuable data across its platforms, and billions of users, Meta’s AI efforts just took a step in the right direction.

TOGETHER WITH BLOTATO

The Rundown: Claude's Cowork tool can dramatically speed up your workflow — and this free beginner course walks you through everything from setup to building a fully functional AI marketing team inside the platform.

In this course, you’ll learn how to:

Set up Cowork

Create Skills and MCP

Build your AI marketing team

Manage your social media content calendar

Watch the course and 10x your productivity with Claude Cowork.

HEYGEN

Image source: HeyGen

The Rundown: HeyGen released Avatar V, a new model the company calls “the most realistic AI avatar model in the world,” and claims that it can eliminate identity drift — the tendency for AI-generated faces to stop resembling the user over time.

The details:

The system builds a full video avatar from a short 15-second phone recording, capturing the user’s real facial details, gestures, and movement patterns.

The model also separates identity from appearance for the first time, allowing users to record once, then swap outfits and backgrounds without filming again.

HeyGen says Avatar V outperformed Google's Veo 3.1 on accuracy and lip sync in internal tests, while also beating out Kling and Seedance in blind tests.

Why it matters: Just like image and video models, AI avatars have come a ridiculously long way over the last few years, going from simple mouth movements to mimicking a user’s micro-movements for indistinguishable outputs. While some may scoff at the idea of an ‘AI twin’, the content creation landscape is changing with or without them.

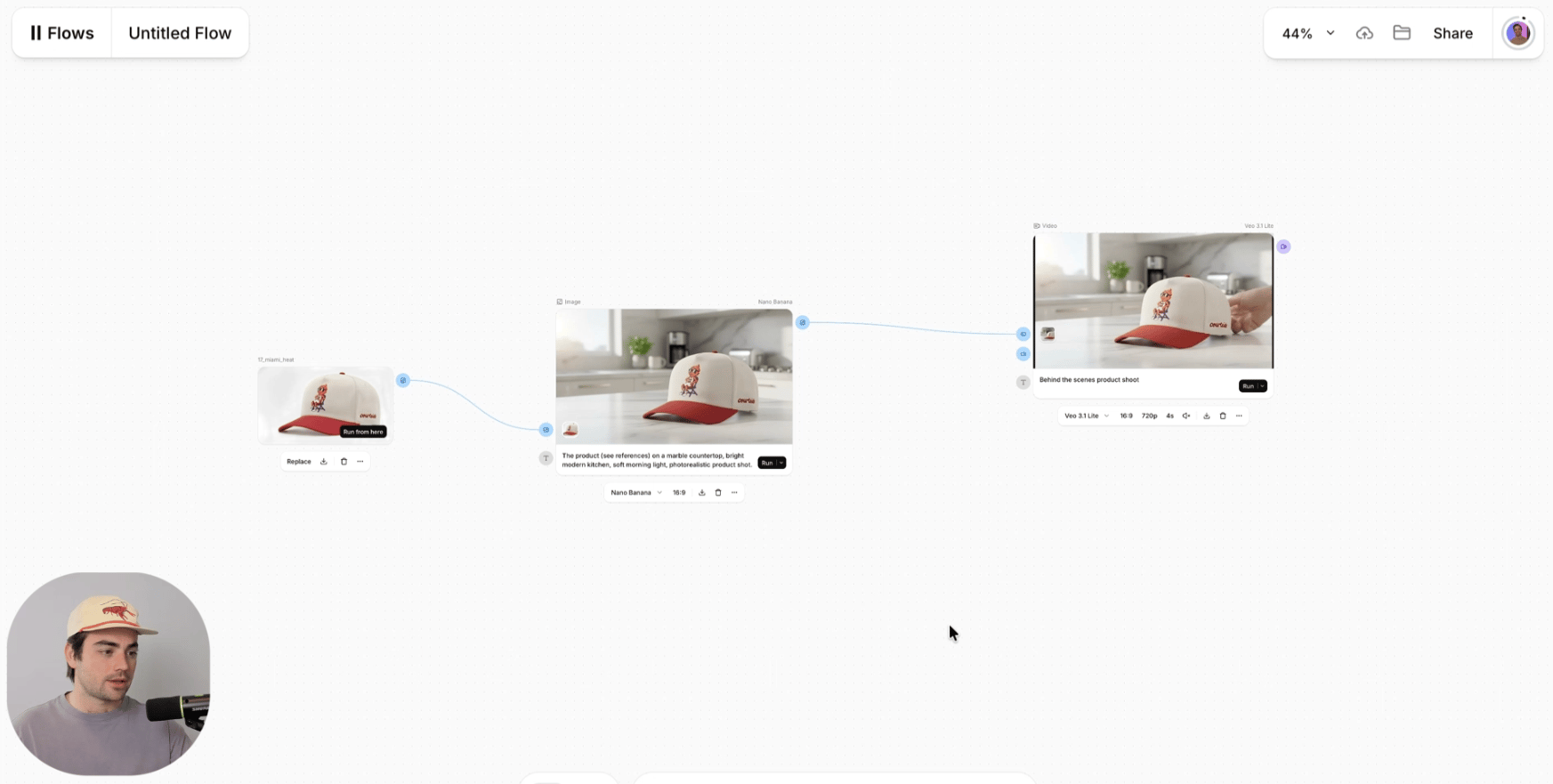

AI TRAINING

The Rundown: In this guide, you will learn how to turn a product photo into a finished video ad using ElevenLabs Flows. It's a new workflow builder that bundles image, video, voice, and music in one place.

Step-by-step:

Open ElevenLabs, click ElevenCreative > Flows, then hit + New Flow. Name it [product line] ad template so you can reuse it

Add an Image Generation node and upload 1-3 product shots. Prompt with a scene, like: “The product (see references) on a white pedestal, studio product shoot, soft morning light, photorealistic product shot”

Add a Video Generation node, drag a line from the image node's output into the video node's start frame, and prompt: “Slow cinematic push-in on the product, soft morning light drifting across the scene, shallow depth of field”

Click Run on the video node > Run till here to generate an image and video in one go. Then, swap out image/video prompts, and quickly iterate on creatives

Pro tip: For audio, add a Text-to-Speech or Music node and connect it to a Mix Audio node alongside the video. You can also try this on other products by duplicating the canvas and swapping in new images.

PRESENTED BY VANTA

The Rundown: If customers, investors, or your sales team keep asking about SOC 2 and you haven't started yet, Vanta's upcoming live session on April 22 is designed to help you take the first step with confidence — all in just 30 minutes.

The session will cover:

What SOC 2 actually is, when you need it, and the difference between Type I and Type II

Where startups lose time and how to avoid common rework during the process

A practical framework for evaluating tools and auditors without overbuying

Register now for the April 22nd session. Can't make it? Sign up anyway to get the recording.

ANTHROPIC

Image source: Anthropic

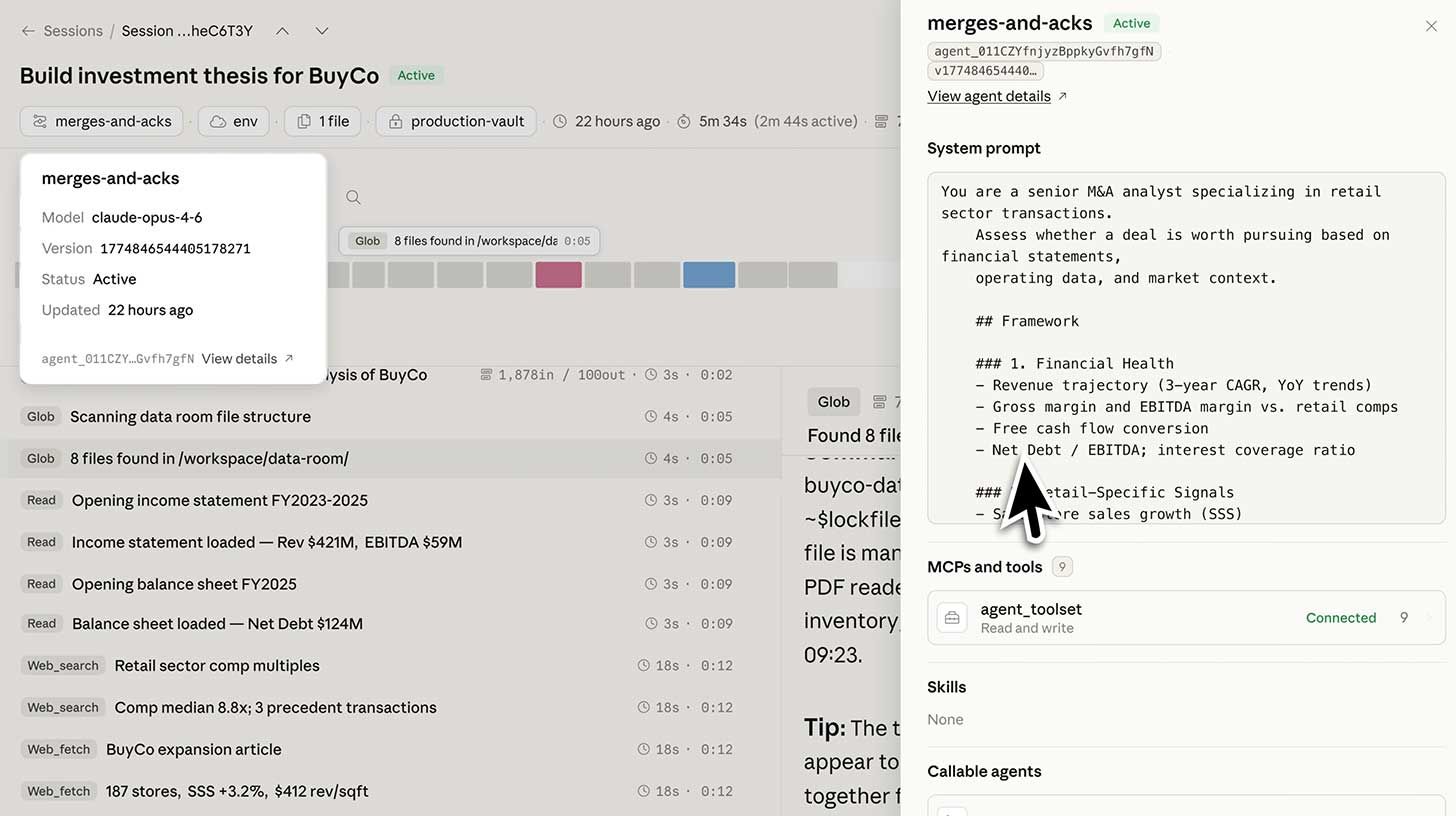

The Rundown: Anthropic opened a public beta for Claude Managed Agents, a new platform that lets developers go from an agent idea to a live product in days — handling all the backend plumbing that used to take engineering teams months to set up.

The details:

Users pick the task, tools, and guardrails, while Managed Agents takes care of running and securing the agent and controlling what it can access.

Agents can work solo for hours without dropping state, with a coordination mode also in preview, letting one agent farm out subtasks to others.

Notion, Rakuten, Asana, and Sentry are early adopters, with Rakuten reportedly setting up agents across five departments in about a week each.

Each agent session costs $0.08 per hour on top of the usual AI usage fee, with users paying based on consumption instead of upfront platform fees.
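
For a rough sense of what that pricing means in practice, here is a minimal Python sketch. Only the $0.08 per session-hour rate comes from the details above; the function name, the 6-hour runtime, and the $3.50 model usage figure are hypothetical inputs chosen for illustration, not Anthropic's numbers or API.

```python
# Back-of-the-envelope estimate of a Managed Agents bill.
# Only the $0.08/hour session rate comes from the newsletter; the usage
# fee is an assumed input, since real model costs depend on tokens used.
SESSION_RATE_PER_HOUR = 0.08  # USD per agent session-hour


def estimate_cost(session_hours: float, model_usage_usd: float) -> float:
    """Return the session runtime charge plus the model usage fee, in USD."""
    if session_hours < 0 or model_usage_usd < 0:
        raise ValueError("inputs must be non-negative")
    return round(session_hours * SESSION_RATE_PER_HOUR + model_usage_usd, 2)


# Hypothetical example: one agent running for 6 hours with $3.50 of model usage.
print(estimate_cost(6, 3.50))
```

In this made-up scenario the session charge is a small slice of the total, which suggests the model usage fee, not the hourly rate, would usually drive the bill.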

Why it matters: Anthropic keeps shipping features that strip away the complexity of getting the most out of its models and tools. Managed Agents does the same for agent building, making it possible for anyone to deploy and control agents without the typical backend headaches.

QUICK HITS

🤖 Scrunch - See how AI interprets your site, run a free audit, and unlock the new way to reach customers*

🧠 Muse Spark - Meta’s multimodal reasoning AI with multi-agent mode

🎥 Avatar V - HeyGen's AI avatar model that generates studio-quality videos

🕹️ Clicky - Open-source AI teacher that lives next to your cursor

*Sponsored Listing

Elon Musk amended his OAI lawsuit to redirect all damages to the nonprofit arm and push Altman off its board, with OAI calling it "a harassment campaign."

Perplexity hit $450M in estimated annual recurring revenue after a 50% monthly jump, driven by its Computer agentic system and usage-based pricing model.

Elon Musk revealed that xAI has seven new models currently in training on its Colossus 2 supercomputer, including massive 6T and 10T parameter systems.

Canva acquired Simtheory and Ortto, adding agentic AI workspace tools and marketing automation to its platform as it pushes end-to-end campaign workflows.

Jeff Bezos’ secretive AI startup Prometheus poached Kyle Kosic, a former xAI co-founder who led the infrastructure team before leaving the startup for OAI in 2024.

OpenAI published a child safety policy blueprint pushing for updated U.S. laws on AI-generated CSAM, stronger reporting, and built-in safeguards to prevent exploitation.

COMMUNITY

Every newsletter, we showcase how a reader is using AI to work smarter, save time, or make life easier.

Today’s workflow comes from reader Lynne B. in the United Kingdom:

"I am a serious amateur photographer. I use in-camera techniques to create expressionist images, and I process the images in Photoshop. I also provide Zoom sessions on my methods for others. Many photographers do not have the facility to create multiple exposures in camera.

I am using ChatGPT to write code for Scripts within Photoshop. These scripts simulate the in-camera multiple exposure process and speed it up. It has taken a while to stop the error codes as the Script area of Photoshop can be temperamental, but the great thing about AI is, it matches my own tenacity with a challenging task."

How do you use AI? Tell us here.

Read our last AI newsletter: Anthropic’s AI is too powerful for the world

Read our last Tech newsletter: This startup wants to hack the night sky

Read our last Robotics newsletter: UBTech offers $18M / year for AI scientist

Today’s AI tool guide: Build an automated ad generator with this tool

Watch our last live workshop: The State of AI Presentation Tools in 2026

That's it for today!

See you soon,

Rowan, Joey, Zach, Shubham, and Jennifer — the humans behind The Rundown