
Welcome, AI enthusiasts.

In the fight against cancer, every second counts, and a new OpenAI partnership is using GPT-4o to help get patients into treatment faster and more efficiently.

By streamlining screenings and crafting personalized treatment plans, AI is already helping patients get care faster than ever before. Let’s explore…

In today’s AI rundown:

Color and OpenAI tackle AI cancer care

Runway debuts Gen-3 Alpha video AI

The Rundown’s 6-step ChatGPT framework

DeepMind creates sound for videos

6 new AI tools & 4 new AI jobs

More AI & tech news

Read time: 4 minutes

LATEST DEVELOPMENTS

COLOR/OPENAI

Image source: OpenAI

The Rundown: Health tech company Color just partnered with OpenAI on an AI assistant that helps doctors craft personalized cancer screening and treatment plans, aiming to drastically reduce delays in care.

The details:

Color’s AI copilot, built on GPT-4o, analyzes patient data, guidelines, and medical records to identify screening gaps and create tailored diagnostic plans.

Automating pre-treatment workups can save crucial weeks or months before treatment begins, which matters because cancer mortality risk rises 6-13% with each month of delay.

In testing, doctors using the AI copilot identified 4x more missing labs and tests than those not using the tool.

Color is aiming to provide AI-generated screening plans for over 200,000 patients by late 2024.
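To make the 6-13% figure more concrete, here is a rough back-of-the-envelope sketch. It assumes the monthly increase compounds multiplicatively over several months of delay, which the original report does not state; the function name `relative_risk` is hypothetical, and this is an illustration, not a clinical model.

```python
# Hypothetical illustration: how a 6-13% per-month rise in mortality risk
# could add up over several months of delay, ASSUMING the monthly increase
# compounds multiplicatively (the underlying figure does not say how).

def relative_risk(monthly_increase: float, months: int) -> float:
    """Relative mortality risk after `months` of delay vs. no delay."""
    return (1.0 + monthly_increase) ** months

for months in (1, 2, 3):
    low = relative_risk(0.06, months)   # lower bound of the 6-13% range
    high = relative_risk(0.13, months)  # upper bound of the 6-13% range
    print(f"{months} month(s) of delay: {low:.2f}x to {high:.2f}x baseline risk")
```

Under this assumption, a three-month delay works out to roughly 1.19x to 1.44x the baseline risk, which is why shaving weeks off pre-treatment workups can matter.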

Why it matters: When it comes to treating the second-leading cause of death worldwide, every day counts, and yet cancer patients frequently suffer from delayed diagnosis and treatment. By streamlining these processes, Color’s AI copilot could make all the difference in the difficult fight against cancer.

TOGETHER WITH OCTOAI

The Rundown: Customizing your LLM is essential to unlocking the full capabilities of AI. Join OctoAI’s upcoming panel discussion on June 25 to learn the strategies you need to move fast and succeed with your own tailored model.

The free Zoom event includes:

A diverse panel of OSS model fine-tuning experts

Practical insights on delivering optimal LLM performance

The latest tuning techniques to match your specific use case

Register now for the free virtual event and level up your tuning knowledge.

RUNWAY

Image source: Runway

The Rundown: Runway just introduced Gen-3 Alpha, a powerful new AI model that can generate highly realistic 10-second video clips from text prompts and images, with improvements to consistency, motion, and structure.

The details:

Gen-3 Alpha is the first in Runway’s next-gen model series, trained on new infrastructure built for large-scale multimodal training as a step toward "general world models".

The model is trained on both images and video, integrating with Runway’s existing tools like Motion Brush and Director mode for advanced editing.

Key capabilities include realistic characters, cinematic camera techniques, and smoother transitions between scene changes.

Why it matters: June 2024 has been the month of AI video acceleration. Between KLING, Luma, and Runway dropping public models, and giants like OpenAI’s Sora and Google’s Veo waiting in the wings — generative video is having a moment.

AI TRAINING

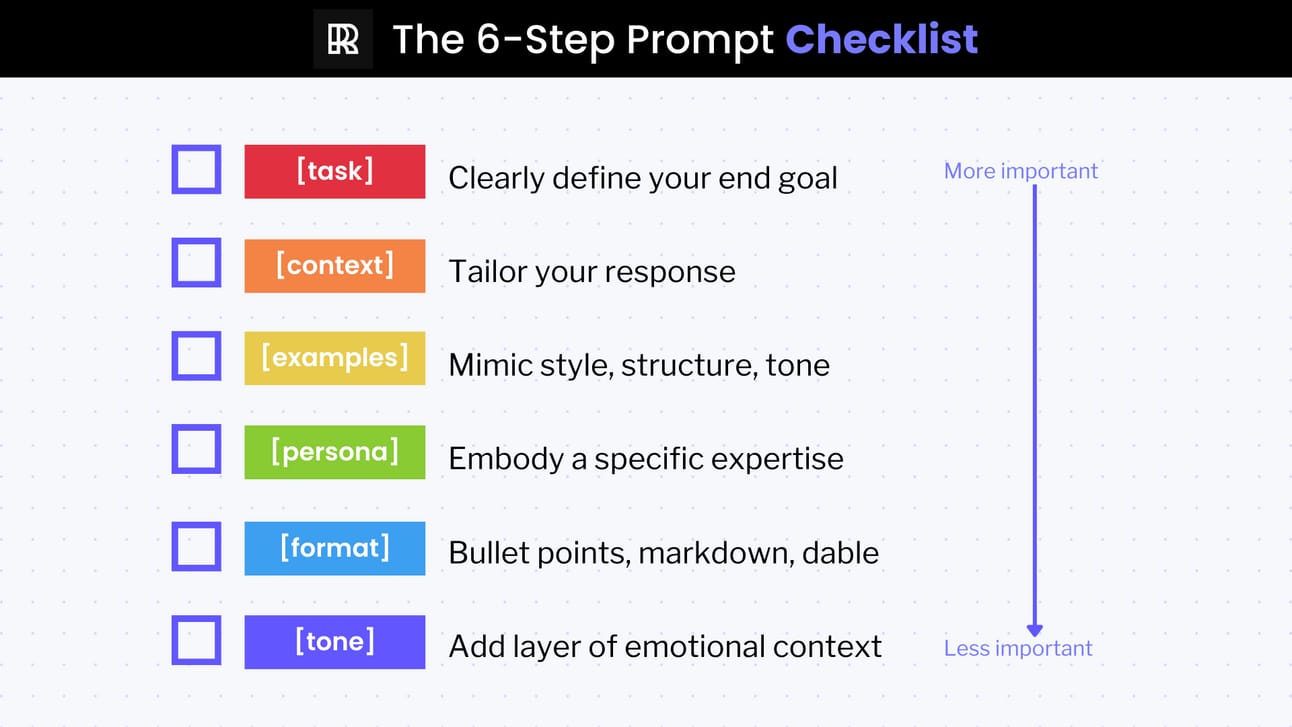

The Rundown: Context is everything when crafting the perfect ChatGPT prompt. Our 6-step Prompt Checklist is a proven method for generating highly effective, context-specific responses.

Step-by-step:

Task: Clearly define the end goal.

Context: Provide relevant details about the situation and background.

Examples: Include sample text to demonstrate the desired style.

Persona: Adopt a specific viewpoint or character if needed.

Format: Specify the output structure you want.

Tone: Indicate the preferred emotion and language style.

Pro tip: When using the checklist, order matters most. Not every step is necessary, but the more context you provide, the better the results.
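If you assemble prompts in code before pasting them into ChatGPT or sending them through an API, the checklist maps naturally onto a small template. This is a minimal sketch under that assumption: `PromptChecklist` and its field names are hypothetical, not part of any OpenAI library. It keeps the six steps in checklist order and skips any step you leave out, since only the task is strictly required.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PromptChecklist:
    """Hypothetical container for the six checklist steps; only `task` is required."""
    task: str
    context: Optional[str] = None
    examples: Optional[str] = None
    persona: Optional[str] = None
    output_format: Optional[str] = None
    tone: Optional[str] = None

    def build(self) -> str:
        # Emit the steps in checklist order, skipping any that were left empty.
        steps = [
            ("Task", self.task),
            ("Context", self.context),
            ("Examples", self.examples),
            ("Persona", self.persona),
            ("Format", self.output_format),
            ("Tone", self.tone),
        ]
        return "\n".join(f"{label}: {text}" for label, text in steps if text)


# Usage: a prompt that uses four of the six steps (no examples, no persona).
prompt = PromptChecklist(
    task="Write a product announcement email for our new note-taking app.",
    context="The audience is existing users; the update adds offline sync.",
    output_format="A subject line plus three short paragraphs.",
    tone="Friendly and concise.",
).build()
print(prompt)
```

Labeling each section explicitly makes it easy to see which steps a prompt is missing, and the fixed ordering reflects the tip that order matters.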

PRESENTED BY IMAGINE AI LIVE

The Rundown: Discover how AI is transforming industries, upending work, and reshaping the business landscape at Imagine AI Live — an immersive one-day event in the heart of New York City.

At Imagine AI Live, you can:

Network with AI-first professionals and leaders from top AI companies, including Bindu Reddy (Abacus), Mark Heaps (Groq), and Jiquan Ngiam (Lutra AI)

Attend workshops on topics like AI Productivity, Agents, Automation, Creativity, and Leadership Strategy

Explore AI solutions and cutting-edge demos in our AI Exhibitor Gallery

Grab your pass today and impact your business in just one day by learning to apply the power of generative AI.

GOOGLE DEEPMIND

Image source: Google DeepMind

The Rundown: Google DeepMind just published new research on the lab’s video-to-audio (V2A) system, which can generate detailed, synchronized soundtracks for videos — including music, sound effects, dialogue, and more.

The details:

V2A combines raw video pixels with text descriptions to produce realistic audio that matches the visuals and tone of a video output.

The V2A model was trained on video, audio, sound effect annotations, and speech transcripts to learn associations between visual and audio events.

DeepMind said it is testing the V2A model with leading filmmakers and plans to conduct more safety testing before opening to the public.

Why it matters: While AI video generation is advancing fast, the results are often eerily silent. Integrating V2A with Veo or other video models could take creative capabilities to the next level, with dialogue, sound effects, and music seamlessly matched to video outputs.

NEW TOOLS & JOBS

🗣️ Coachvox - Generate leads with an AI chatbot version of you

👩‍💼 Olvy - Customer feedback analysis with AI

🎨 Stable Diffusion 3 Medium - Open model with enhanced image generation quality

🔨 Gradio - Build and share user-friendly machine learning model apps

💼 Dottypost - Create posts and grow your LinkedIn audience

🚀 MagicPublish - Automatically generate YouTube titles, tags, and descriptions

🎨 Glean - UX Designer

🔬 Fiddler AI - Staff AI Scientist

📋 Notable - Product Operations Manager

📊 Findem - Data Analyst

QUICK HITS

Free event: Get promoted using AI. If you've been struggling to get to the next level, AI can help. Get tangible ideas from The Prompt Community's Ashley Gross that you can put to work right away (as in, this afternoon) to get promoted. RSVP now.*

Adobe integrated new Firefly AI capabilities into Acrobat, allowing users to create and edit images within PDFs using text prompts — also adding the ability to access an AI assistant for insights, content creation, and more.

The Reuters Institute for the Study of Journalism published a new report finding growing public wariness of AI-generated news content, with many expressing discomfort about its potential impact on content reliability and trust.

The U.S. Navy is deploying AI-powered underwater drones to better detect threats, with plans to expand the tech’s use in identifying enemy ships and aircraft.

Luma teased new control features coming to its Dream Machine video model, including the ability to quickly change scenes and precisely edit characters — also launching the ability to extend video and remove watermarks.

Anthropic published new research showing that AI models can engage in ‘reward tampering’, learning to cheat the system and grant themselves higher rewards even without being explicitly trained to do so.

*Sponsored listing

THAT’S A WRAP

SPONSOR US

Get your product in front of 600k+ AI enthusiasts

Our newsletter is read by thousands of tech professionals, investors, engineers, managers, and business owners around the world. Get in touch today.

FEEDBACK

How would you rate today's newsletter?

If you have specific feedback or anything interesting you’d like to share, please let us know by replying to this email.